Fraud Farms Are a Business. The Defense Has to Be Too.

A human fraud farm is not a technical problem. It’s a business problem.

The operation has labor costs, coordination overhead, tooling expenses and margin requirements. Workers get paid per account created, per bonus redeemed, per task completed. Coordinators manage throughput and respond to defensive friction. Operators make deliberate ROI decisions, deploying human workers, mobile device farms, or AI agents based on what generates the best return against each target.

When the target stops being profitable, the operation stops. Not because it was blocked. Because it wasn’t worth continuing.

That’s the insight that changes the entire defense model. The goal isn’t to detect fraud. It’s to make fraud unprofitable.

Why detection-first approaches fail economically

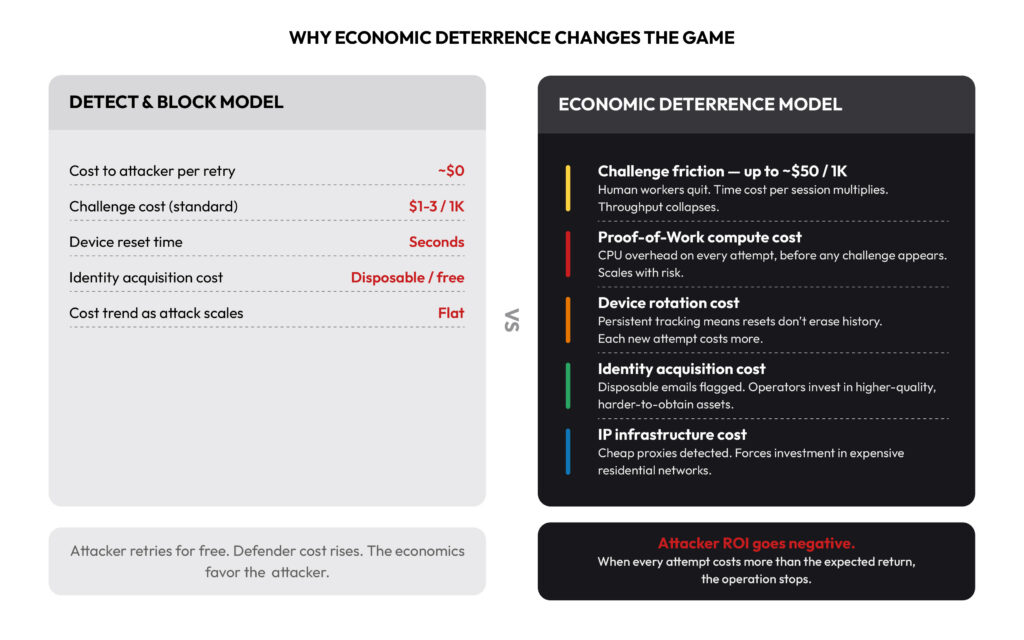

Most fraud defenses operate on a detect-and-block model: identify suspicious sessions, block them, update the rules, repeat. The problem isn’t that this approach fails to catch fraud. The problem is that it doesn’t change the economics of the attack.

Being blocked is free for attackers. A blocked session costs them nothing: they rotate an IP, swap a browser profile and try again, so the cost of a retry is effectively zero. Meanwhile, the cost of detection falls on the defender: more tooling, more tuning, more analyst time, more infrastructure. The economics structurally favor the attacker. Detection gets more expensive. Retry stays cheap.

Human fraud farms sharpen this problem. Their cost per attempt is already higher than that of automated attacks, because they're paying real workers. But standard challenge-response tests, which those workers solve in seconds for fractions of a cent, barely register against that cost structure. The friction is real but manageable. The economics still work in the attacker's favor.

The only defense that structurally changes this dynamic is one that makes every attempt cost the attacker more than the expected return.
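One way to write this condition down, using symbols introduced only for this illustration (they are not from the original): let $c_a$ be the attacker's total cost per attempt, $p$ the probability that an attempt succeeds, and $V$ the value extracted from a successful attempt.

```latex
\mathbb{E}[\pi_{\text{attempt}}] = p\,V - c_a,
\qquad
\text{the attack stops paying when } c_a > p\,V
```

Detect-and-block mainly lowers $p$, but when $c_a$ is close to zero even a small $p\,V$ keeps expected profit positive. Raising $c_a$ moves the break-even point directly, whatever the detection rate.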

What economic deterrence actually means in practice

Economic deterrence isn’t a single mechanism. It’s a compounding cost structure applied across every element of an attack operation.

Challenge friction accumulates across the workforce. When challenges are designed specifically to defeat human solvers (requiring genuine cognitive effort, not reflexive clicks, and scaling in difficulty for flagged sessions) the cost per account climbs. A worker who completes hundreds of tasks per hour under standard challenge-response conditions might complete only a handful under sustained, escalating friction. The per-account labor cost multiplies. The operation’s throughput collapses.
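The throughput effect comes down to simple division: a worker's hourly pay spread over the accounts they finish in that hour. The sketch below assumes, purely for illustration, a $3/hour solver wage, 300 accounts per hour under standard challenges and 5 per hour under escalating friction; none of these figures come from field data.

```python
def labor_cost_per_account(hourly_wage: float, accounts_per_hour: float) -> float:
    """Labor cost of one account: hourly pay divided by hourly output."""
    if accounts_per_hour <= 0:
        raise ValueError("accounts_per_hour must be positive")
    return hourly_wage / accounts_per_hour


# Illustrative assumptions only, not measured values.
WAGE = 3.00            # hypothetical solver wage, dollars per hour
STANDARD_RATE = 300    # hypothetical accounts/hour under standard challenges
ESCALATED_RATE = 5     # hypothetical accounts/hour under escalating friction

baseline = labor_cost_per_account(WAGE, STANDARD_RATE)
escalated = labor_cost_per_account(WAGE, ESCALATED_RATE)

print(f"standard:  ${baseline:.3f} per account")
print(f"escalated: ${escalated:.3f} per account")
print(f"multiplier: {escalated / baseline:.0f}x")
```

The multiplier equals the throughput drop (300 / 5 = 60), so it holds whatever wage is assumed.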

Field intelligence from attacker-facing solver marketplaces confirms this directly. On platforms where fraud farms source challenge-solving labor, Arkose Labs challenges are consistently the most expensive to solve: up to ~$50 per 1,000 solves, versus $1–3 per 1,000 for standard alternatives. Human solver workers quit on Arkose challenges, describing them as too difficult, too slow and not worth the pay. That's not a marketing claim; it's attacker-sourced evidence that the economics are working.
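Converting the quoted marketplace prices to a per-solve cost shows the size of the gap. This uses only the figures above, taking $50 per 1,000 as the top of the quoted range; the 10,000-account batch is a hypothetical volume chosen for illustration.

```python
def cost_per_solve(price_per_thousand: float) -> float:
    """Dollar cost of a single solve, given a marketplace price per 1,000 solves."""
    return price_per_thousand / 1000


ARKOSE_UPPER = 50.0          # "up to ~$50 per 1,000" (top of the quoted range)
STANDARD_RANGE = (1.0, 3.0)  # "$1-3 per 1,000" for standard alternatives
BATCH = 10_000               # hypothetical number of accounts, one solve each

arkose = cost_per_solve(ARKOSE_UPPER)
for price in STANDARD_RANGE:
    standard = cost_per_solve(price)
    print(
        f"${standard:.3f} vs ${arkose:.3f} per solve ({arkose / standard:.1f}x); "
        f"batch of {BATCH:,}: ${standard * BATCH:,.0f} vs ${arkose * BATCH:,.0f}"
    )
```

At the top of the quoted range, that is roughly 17 to 50 times the per-solve cost of standard alternatives.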

Proof-of-Work compounds the compute cost. Running silently in the background on every suspicious session, Proof-of-Work forces attackers to spend CPU cycles, and money, on every attempt, regardless of whether they reach a visual challenge. Across a large workforce, this overhead accumulates. It raises the floor cost of every session before a single worker engages.

Device tracking eliminates the reset advantage. A core part of fraud farm economics is the ability to reset: rotate a proxy, switch a browser profile or use a fresh device, and start over. Persistent device identification changes that calculation. When device history follows workers across resets, each new attempt costs more, and the infrastructure investment required to stay ahead of detection grows.
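A minimal sketch of why persistence defeats resets. It assumes a hypothetical `Session` record and hypothetical `hardware_traits` that stay stable when a worker rotates a proxy or switches browser profile; which signals actually survive a reset, and how a production system weighs them, is not described in the original and is an assumption here. The `friction_level` escalation rule is likewise hypothetical.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    ip: str                           # changes with every proxy rotation
    browser_profile: str              # changes when a worker switches profiles
    hardware_traits: tuple[str, ...]  # hypothetical signals assumed stable across resets


def device_key(session: Session) -> str:
    """Derive an ID from stable traits only, ignoring what a reset changes."""
    raw = "|".join(session.hardware_traits).encode()
    return hashlib.sha256(raw).hexdigest()


class DeviceHistory:
    """Attempt counts that persist per device key, not per IP or profile."""

    def __init__(self) -> None:
        self._attempts: defaultdict[str, int] = defaultdict(int)

    def record(self, session: Session) -> int:
        key = device_key(session)
        self._attempts[key] += 1
        return self._attempts[key]


def friction_level(attempts_seen: int, cap: int = 5) -> int:
    """Hypothetical escalation: one step harder per repeat attempt, capped."""
    return min(attempts_seen - 1, cap)


if __name__ == "__main__":
    history = DeviceHistory()
    traits = ("gpu:model-a", "screen:1920x1080", "cores:8")
    resets = [("203.0.113.5", "p1"), ("198.51.100.7", "p2"), ("192.0.2.9", "p3")]
    for ip, profile in resets:
        seen = history.record(Session(ip, profile, traits))
        print(ip, profile, "attempt", seen, "friction", friction_level(seen))
```

Rotating the IP and profile leaves the device key unchanged, so the count and the friction keep climbing. Only genuinely new hardware produces a fresh key, and that hardware is exactly the cost the operator then has to pay.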

Identity cost rises when cheap identities stop working. Email Intelligence flags disposable, bulk-generated and breach-associated email addresses at the point of registration, making low-cost identity acquisition ineffective. Operators who want to create convincing accounts have to invest in higher-quality identity material: aged email accounts, plausible address patterns, consistent behavioral history. The per-account cost of building the attack inventory rises before any fraud is attempted.
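A toy illustration of screening at the point of registration, not a description of how Email Intelligence works. The three-domain blocklist and the "letters followed by five or more digits" pattern are hypothetical stand-ins; breach association requires an external dataset and is left out.

```python
import re

# Hypothetical, tiny blocklist; real services track far larger, updated lists.
DISPOSABLE_DOMAINS = {"mailinator.com", "10minutemail.com", "guerrillamail.com"}

# Hypothetical heuristic for bulk-generated local parts such as "jsmith84731".
BULK_PATTERN = re.compile(r"[a-z]+\d{5,}")


def email_flags(address: str) -> list[str]:
    """Return screening flags for one address at registration time."""
    local, sep, domain = address.strip().lower().rpartition("@")
    if not sep or not local or not domain:
        return ["malformed"]
    flags = []
    if domain in DISPOSABLE_DOMAINS:
        flags.append("disposable")
    if BULK_PATTERN.fullmatch(local):
        flags.append("bulk_pattern")
    return flags


if __name__ == "__main__":
    for addr in ["jsmith84731@mailinator.com", "alice@example.com", "no-at-sign"]:
        print(addr, email_flags(addr))
```

Even crude checks like these make the cheapest identities useless, which is what pushes operators toward the more expensive identity material described above.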

IP infrastructure cost escalates under pressure. When cheap datacenter proxies get detected, operators have to move to residential proxy networks, which are meaningfully more expensive. Every layer of detection that forces a higher-cost evasion technique raises the floor cost of running the operation.

The volatility data makes the case

Human fraud farm operators make explicit ROI decisions. They scale operations up when a target is profitable, and scale them down, or abandon the target entirely, when costs rise and returns fall. This is visible directly in attack pattern data: human fraud farm activity surges and collapses quarter over quarter, reflecting operators responding to defensive economics in real time.

This behavior is exactly what economic deterrence is designed to produce. The goal isn't to block every individual attempt; it's to make the sustained operation financially irrational. When the cost of attacking a platform consistently exceeds the expected return, operators redirect to softer targets. The attack doesn't just slow down. It stops.

The AI evolution doesn’t change the model — it raises the stakes

As AI augments fraud farm operations (handling coordination, generating synthetic identities and executing attacks at machine scale), the “infinite willpower” problem becomes acute. AI agents don’t fatigue, don’t quit, and don’t respond to the psychological friction that makes challenges effective against human workers.

But AI agents aren’t immune to cost. They can probe indefinitely, yet every probe costs compute, every challenge consumes time, and every synthetic identity requires real investment to generate and maintain. Economic deterrence applies to AI-assisted operations by the same logic it applies to human ones: make every attempt cost more than the expected return, and the attack stops generating positive ROI.

The difference is that the cost threshold required to deter an AI agent is higher than the one that deters a human worker. That’s why the challenge technology has to keep evolving, and why classification matters: knowing whether a session is a human, an automated bot or an AI agent changes which deterrence response is appropriate. The Observe → Classify → Act workflow gives fraud and security teams the policy control to respond to each threat type on its own terms, in real time.
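The Observe → Classify → Act sequence can be sketched as a small policy dispatch. Everything in it is hypothetical: the `Observation` fields, the threshold of three indicators and the action names are illustrative, not Arkose's classifier or its policy options.

```python
from dataclasses import dataclass
from enum import Enum


class SessionClass(Enum):
    HUMAN = "human"
    BOT = "bot"
    AI_AGENT = "ai_agent"


@dataclass
class Observation:
    """Observe: signals collected for one session (hypothetical fields)."""
    automation_signals: int  # count of scripted-behavior indicators
    agent_indicators: int    # count of AI-agent indicators
    flagged: bool            # session already marked as suspicious


def classify(obs: Observation) -> SessionClass:
    """Classify: toy threshold rules standing in for a real classifier."""
    if obs.agent_indicators >= 3:
        return SessionClass.AI_AGENT
    if obs.automation_signals >= 3:
        return SessionClass.BOT
    return SessionClass.HUMAN


# Act: one response per threat type, set by the fraud or security team.
POLICY = {
    SessionClass.HUMAN: "allow",
    SessionClass.BOT: "proof_of_work_and_challenge",
    SessionClass.AI_AGENT: "escalating_challenge_and_proof_of_work",
}


def act(obs: Observation) -> str:
    cls = classify(obs)
    if cls is SessionClass.HUMAN and obs.flagged:
        return "escalating_challenge"  # flagged human sessions get harder challenges
    return POLICY[cls]


if __name__ == "__main__":
    print(act(Observation(automation_signals=0, agent_indicators=0, flagged=True)))
```

Keeping the responses in a table, separate from classification, is what lets a team change how it treats each threat type without touching how sessions are classified.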

What this looks like as a defense posture

Economic deterrence as a defense posture means asking a different question. Not: did we catch this session? But: is it still worth it for them to keep trying?

That question is answered across the full cost structure of the attack operation: challenge friction, compute cost, device tracking, identity cost and infrastructure cost, compounding with every attempt, across every worker and every session. When the aggregate cost exceeds the aggregate return, the operation stops. Not because it was detected and blocked. Because it wasn’t worth running.
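The aggregate test can be sketched as a simple cost model. All dollar figures, the success rate and the fixed overhead below are hypothetical, chosen only to show how the five cost layers listed above add up per attempt while coordination and tooling overhead is paid once per campaign.

```python
from dataclasses import astuple, dataclass


@dataclass
class LayerCosts:
    """Attacker cost per attempt imposed by each layer, in dollars (hypothetical)."""
    challenge_labor: float
    proof_of_work_compute: float
    device_replacement: float
    identity: float
    proxy: float

    def per_attempt(self) -> float:
        return sum(astuple(self))


def worth_running(costs: LayerCosts, attempts: int, success_rate: float,
                  value_per_success: float, fixed_overhead: float) -> bool:
    """True if the campaign's expected return exceeds its total cost."""
    aggregate_cost = fixed_overhead + attempts * costs.per_attempt()
    aggregate_return = attempts * success_rate * value_per_success
    return aggregate_return > aggregate_cost


if __name__ == "__main__":
    # Hypothetical campaign: 10,000 attempts, 20% success, $2 per success, $500 overhead.
    soft = LayerCosts(0.003, 0.0, 0.0, 0.01, 0.001)
    hardened = LayerCosts(0.60, 0.05, 0.50, 0.40, 0.30)
    for name, costs in [("soft target", soft), ("hardened target", hardened)]:
        print(name, worth_running(costs, 10_000, 0.20, 2.00, 500.0))
```

Once the per-attempt layer cost exceeds `success_rate * value_per_success`, no volume of attempts makes the campaign profitable; below that point, volume still has to cover the fixed overhead.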

That’s the defense model that works against human fraud farms. And it’s the only one that holds as the threat continues to evolve.

Arkose Labs’ economic deterrence model is built to make fraud operations unprofitable — against human workers, automated bots and AI-augmented hybrid attacks. Learn how the Arkose Titan Platform protects your platform at arkoselabs.com.