Why Your Fraud Defenses Were Never Built for This

Most fraud and security teams discover their human fraud farm problem the same way: gradually, then all at once.

The signals are there in retrospect: slightly elevated fake account rates, promo budgets draining faster than acquisition numbers justify, a pattern of transactions that individually look clean but collectively don’t make sense. By the time the pattern is confirmed, the operation has already shifted. The workers have moved on. The accounts are in play. The damage is done.

This isn’t a detection failure in the conventional sense. The tools worked as designed. The problem is that they were never designed for this threat.

The assumption that breaks everything

The overwhelming majority of fraud detection infrastructure is built on a single foundational assumption: suspicious activity looks different from legitimate activity, and the way to protect against it is to identify and block the difference.

For automated attacks, that assumption holds. Bots leave machine signatures. They move too fast, too consistently, too predictably. Behavioral analytics catches the pattern. Rate limiting catches the volume. Device fingerprinting catches the infrastructure.

Human fraud farms are specifically engineered to invalidate every part of that assumption.

Workers are real humans on real devices. They generate authentic behavioral signals: genuine mouse movement, realistic keystroke dynamics, and natural navigation patterns. They solve challenge-response tests designed to distinguish humans from machines, because they are humans. They use residential IP addresses that pass reputation checks. They operate at low enough volume per worker that velocity thresholds don’t trigger.

The individual session is indistinguishable from a legitimate consumer’s. That’s not a flaw in the operation; it’s the design. Human fraud farms exist specifically because human behavior can’t be caught by tools built to detect automated behavior.

Where each defense layer breaks down

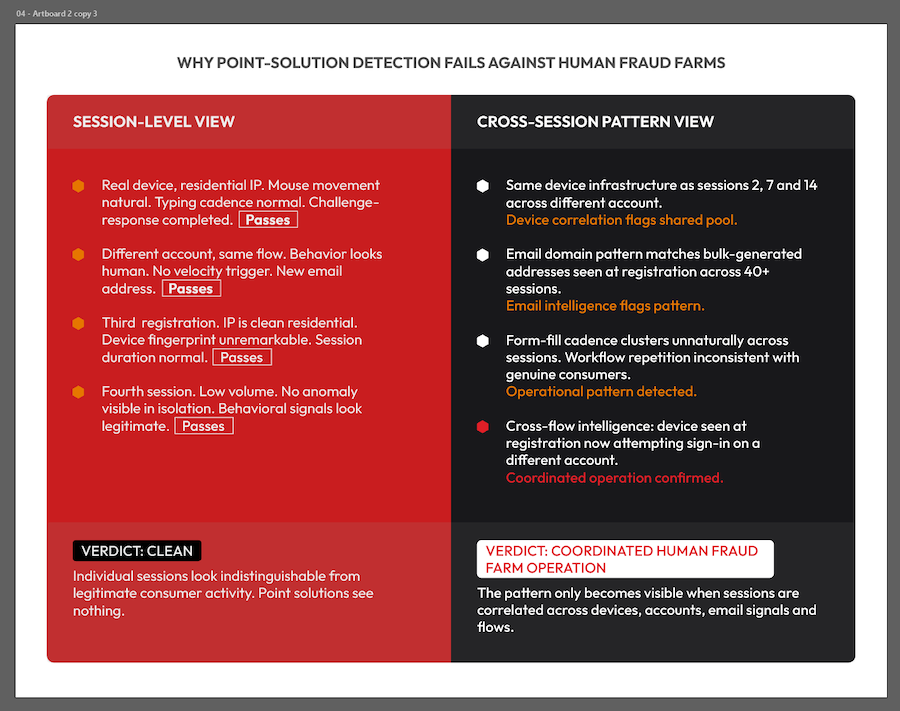

Behavioral biometrics identifies scripted, machine-generated interaction patterns. Human workers don’t generate those; they produce the same genuine biometric signals that legitimate consumers produce. Behavioral biometrics can identify the operational patterns of coordinated farm activity, such as repetitive workflows and unnatural session cadences, but only when sessions can be correlated across accounts. Without that cross-session visibility, individual sessions pass clean.
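To make the cross-session point concrete, here is a minimal Python sketch under simplifying assumptions: each session is reduced to a hypothetical account ID plus an ordered list of action names, and a "repetitive workflow" is approximated as an exact action sequence repeated by several distinct accounts. Any one of those sessions looks ordinary on its own; the repetition only appears when sessions are grouped across accounts. The function name, action names, and the `min_accounts` threshold are illustrative, not part of any product.

```python
from collections import defaultdict

# Hypothetical threshold: how many distinct accounts must share an identical
# action sequence before it is treated as a scripted workflow.
MIN_ACCOUNTS = 3

def repeated_workflows(
    sessions: list[tuple[str, list[str]]], min_accounts: int = MIN_ACCOUNTS
) -> dict[tuple[str, ...], set[str]]:
    """Return action sequences performed by at least `min_accounts` distinct accounts.

    Each session is an (account_id, actions) pair. Sequences are compared by
    exact match, a deliberate simplification of real workflow similarity.
    """
    accounts_by_sequence: dict[tuple[str, ...], set[str]] = defaultdict(set)
    for account_id, actions in sessions:
        # Tuples are hashable, so the full ordered sequence can be a dict key.
        accounts_by_sequence[tuple(actions)].add(account_id)
    return {
        seq: accounts
        for seq, accounts in accounts_by_sequence.items()
        if len(accounts) >= min_accounts
    }

# Three workers following the same script, plus one ordinary consumer.
script = ["landing", "signup", "verify_email", "redeem_promo"]
sessions = [
    ("w1", script),
    ("w2", script),
    ("w3", script),
    ("u1", ["landing", "browse", "product", "signup"]),
]
print(repeated_workflows(sessions))
```

Exact matching is easy to evade by inserting a random step, so a real system would more plausibly compare sequences by similarity and add timing features. The sketch only shows why the signal exists at the cross-account level and not inside any single session.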

Challenge-response technology is explicitly designed to separate humans from bots. Against fraud farms, it does exactly that, and passes the human workers straight through. Workers solve standard challenge-response tests in seconds, hundreds of times per hour, at negligible cost. These tests aren’t a defense against human fraud; they’re a small inconvenience that fraud farm economics easily absorb.

IP reputation and rate limiting fail for similar reasons. Workers use residential proxy networks specifically to appear as legitimate consumer traffic. Volume is distributed across dozens or hundreds of workers, keeping individual session rates well below the thresholds that would trigger an alert. The aggregate attack is massive. Each individual signal is invisible.
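The arithmetic behind that last point is easy to reproduce. The minimal Python sketch below assumes a simple fixed-window limiter keyed on source IP with a hypothetical threshold of 30 requests per hour, and compares a single bot against a farm of 200 workers, each behind its own residential IP and making 20 requests per hour. The numbers and IP labels are illustrative, not measured.

```python
from collections import Counter

# Hypothetical per-source limit: flag any IP that sends more than this many
# requests within one fixed one-hour window.
PER_IP_LIMIT_PER_HOUR = 30

def per_ip_alerts(request_ips: list[str], limit: int = PER_IP_LIMIT_PER_HOUR) -> set[str]:
    """Return source IPs whose request count in the window exceeds `limit`.

    `request_ips` holds one source IP per request seen during the window.
    """
    counts = Counter(request_ips)
    return {ip for ip, n in counts.items() if n > limit}

def simulate_farm(workers: int, requests_per_worker: int) -> list[str]:
    """Model a farm where every worker sits behind a distinct residential IP."""
    return [
        f"residential-ip-{w}"
        for w in range(workers)
        for _ in range(requests_per_worker)
    ]

farm = simulate_farm(workers=200, requests_per_worker=20)
bot = ["bot-ip"] * len(farm)  # same total volume from a single source

print(len(farm))            # → 4000
print(per_ip_alerts(farm))  # → set()
print(per_ip_alerts(bot))   # → {'bot-ip'}
```

Both scenarios send 4,000 requests, yet only the bot trips the limiter. Lowering the per-IP threshold far enough to catch 20 requests an hour would risk flagging ordinary consumers, which is why volume-based controls alone can’t close this gap.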

Point solutions at individual flows give fraud farms their most exploitable gap. An operator who encounters friction at registration simply routes activity through a different flow: sign-in, payment, or account update. Each point solution sees a fragment of the operation; none sees the whole. The seams between solutions are where human fraud farm operators concentrate.

The pattern problem

Fraud farm detection ultimately requires answering a question that individual session analysis can’t answer: is this consumer real, or one account in a coordinated operation?

That question can only be answered by correlating signals across sessions, devices, accounts, flows, and time. The same device showing up across multiple accounts. The same infrastructure characteristics across seemingly unrelated sessions. Email addresses following patterns consistent with bulk generation. Behavioral signatures that individually look normal but cluster in ways legitimate consumers don’t cluster.
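One common way to express that kind of correlation is to link accounts that share an attribute and look at the size of the resulting groups. The minimal Python sketch below assumes each session carries a hypothetical `account_id`, `device_id`, and `email`. It links accounts that share a device or an email "stem" (the local part with trailing digits removed, a crude stand-in for bulk-generated addresses) using union-find, then reports groups at or above an illustrative size threshold. It is a sketch of the general technique, not how any particular vendor implements it.

```python
import re
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Session:
    account_id: str
    device_id: str  # hypothetical device fingerprint identifier
    email: str

def email_stem(email: str) -> str:
    """Crude bulk-generation signal: strip trailing digits from the local part.

    'jane.doe123@example.com' and 'jane.doe7@example.com' share a stem.
    This is an illustrative heuristic, not a production rule.
    """
    local, _, domain = email.lower().partition("@")
    return re.sub(r"\d+$", "", local) + "@" + domain

def linked_clusters(sessions: list[Session], min_size: int = 3) -> list[set[str]]:
    """Group accounts that share a device or an email stem; return large groups."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    # Index accounts by every attribute value they expose.
    by_key: dict[str, set[str]] = defaultdict(set)
    for s in sessions:
        find(s.account_id)  # register every account, even unlinked ones
        by_key["device:" + s.device_id].add(s.account_id)
        by_key["email:" + email_stem(s.email)].add(s.account_id)

    # Accounts sharing any attribute value end up in the same cluster.
    for accounts in by_key.values():
        first, *rest = accounts
        for other in rest:
            union(first, other)

    clusters: dict[str, set[str]] = defaultdict(set)
    for account in parent:
        clusters[find(account)].add(account)
    return [c for c in clusters.values() if len(c) >= min_size]

sessions = [
    Session("a1", "dev-1", "jane.doe1@example.com"),
    Session("a2", "dev-1", "mark.lee@example.com"),    # shares a device with a1
    Session("a3", "dev-2", "jane.doe2@example.com"),   # shares an email stem with a1
    Session("a4", "dev-3", "sam.kim@example.org"),     # unrelated consumer
]
print(linked_clusters(sessions))
```

None of the three linked accounts shares every attribute with the others, yet transitive links pull them into one group. Shared signals are not proof on their own: a family sharing a tablet, or two unrelated people named Jane Doe, would also link, so cluster size, signal mix, and account age would all need to feed the decision.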

This is also why the volatility of human fraud farms makes detection so difficult. Hostile actors rapidly scale operations up or down in response to defensive countermeasures and profit opportunities. By the time a pattern is confirmed in historical data, the operation has adapted. Static rules and signature-based detection are perpetually behind.

The Arkose Labs network data reflects this directly. Malicious traffic from human fraud farms surged significantly in one quarter, plummeted in the next, then surged again, reflecting operators making active ROI decisions: shifting between attack mechanisms and targeting different platforms as defenses tighten. The operation is adaptive by design. Detection-first approaches chase it; economic deterrence makes the target not worth attacking.