Blog

Your SMS Verification Flow Is a Revenue Stream for Fraud Farms

The Economics of Fraud Have Changed. Here’s Why.

Fraud Farms Are a Business. The Defense Has to Be Too.

The Agentic AI Security Category Is Converging on the Wrong Answer

Why Your Fraud Defenses Were Never Built for This

The Attack Runs Itself: What Agentic AI Fraud Actually Looks Like

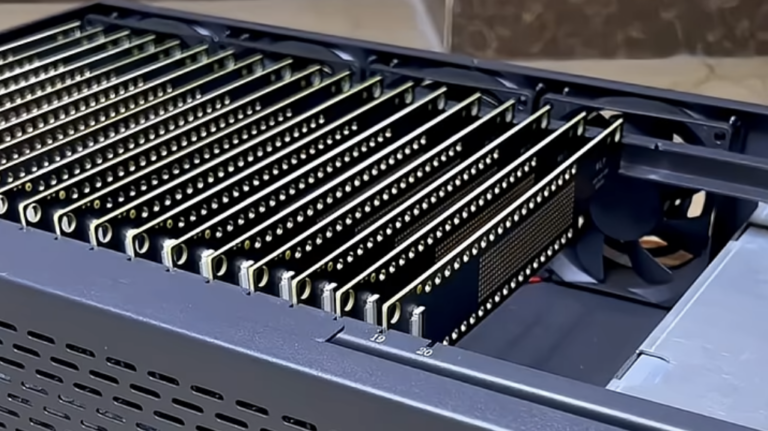

The Fraud Farm Threat Has Evolved. Your Defenses Haven’t.